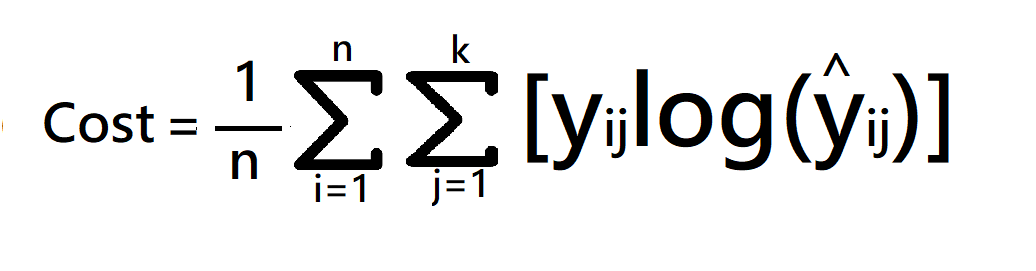

"The function we want to minimize or maximize is called the objective function or criterion. When we are minimizing it, we may also call it the cost function, loss function, or error function." (Deep Learning, 2016)

A loss function is for a single training example. It is also sometimes called an error function. A cost function, on the other hand, is the average loss over the entire training dataset. The optimization strategies aim at minimizing the cost function.

"A few basic functions are very commonly used. The mean squared error is popular for function approximation (regression) problems. The cross-entropy error function is often used for classification problems when outputs are interpreted as probabilities of membership in an indicated class." (p. 156, Neural Smithing: Supervised Learning in Feedforward Artificial Neural Networks, 1999)

"Most modern neural networks are trained using maximum likelihood. This means that the cost function is described as the cross-entropy between the training data and the model distribution." (p. 179, Deep Learning, 2016)

Machine Learning/Deep Learning Problems

Regression Problem

A problem where you predict a real-valued quantity.

- Output Layer Configuration: One node with a linear activation unit.
- Loss Function: Mean Squared Error (MSE).

Binary Classification Problem

A problem where you classify an example as belonging to one of two classes. The problem is framed as predicting the likelihood of an example belonging to class one, e.g. the class that you assign the integer value 1, whereas the other class is assigned the value 0.

- Output Layer Configuration: One node with a sigmoid activation unit.
- Loss Function: Cross-Entropy, also referred to as Logarithmic loss.

Multi-Class Classification Problem

A problem where you classify an example as belonging to one of more than two classes. The problem is framed as predicting the likelihood of an example belonging to each class.

- Output Layer Configuration: One node for each class using the softmax activation function.
- Loss Function: Cross-Entropy, also referred to as Logarithmic loss.

Machine Learning/Deep Learning Loss Functions

Regression Loss Functions

Mean Squared Error Loss

Mean Squared Error loss, or MSE for short, is calculated as the average of the squared differences between the predicted and actual values. The result is never negative, regardless of the sign of the predicted and actual values, and a perfect value is 0.0. The loss value is minimized, although it can be used in a maximization optimization process by making the score negative.

```python
def mean_squared_error(actual, predicted):
    # accumulate the squared difference for each example
    sum_square_error = 0.0
    for i in range(len(actual)):
        sum_square_error += (actual[i] - predicted[i]) ** 2.0
    # average over the number of examples
    mean_square_error = 1.0 / len(actual) * sum_square_error
    return mean_square_error
```

scikit-learn provides the same metric, where `y_true` and `y_pred` hold the actual and predicted values:

```python
from sklearn.metrics import mean_squared_error

mse = mean_squared_error(y_true, y_pred)
```

The MSE loss function penalizes the model for making large errors by squaring them. But there's a caveat. Squaring a large quantity makes it even larger, right? This property makes the MSE cost function less robust to outliers. Therefore, it should not be used if our data is prone to many outliers.

Absolute Error Loss

Absolute Error for each training example is the distance between the predicted and the actual values, irrespective of the sign. Absolute Error is also known as the L1 loss. The cost is the mean of these absolute errors (MAE).
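To make the outlier point concrete, here is a minimal sketch that computes the mean absolute error next to the mean squared error on the same predictions. The helper `mean_absolute_error` and the sample values are assumptions made up for illustration, not taken from the article; the MAE is taken to be the average of the absolute differences between actual and predicted values.

```python
def mean_absolute_error(actual, predicted):
    # accumulate the absolute difference for each example
    sum_absolute_error = 0.0
    for a, p in zip(actual, predicted):
        sum_absolute_error += abs(a - p)
    # average over the number of examples
    return sum_absolute_error / len(actual)


def mean_squared_error(actual, predicted):
    # accumulate the squared difference for each example
    sum_square_error = 0.0
    for a, p in zip(actual, predicted):
        sum_square_error += (a - p) ** 2.0
    # average over the number of examples
    return sum_square_error / len(actual)


# Illustrative values only (assumed, not from the article)
actual = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 2.5, 2.5, 3.5]      # every prediction is off by 0.5
with_outlier = [1.5, 2.5, 2.5, 14.0]  # last prediction is off by 10.0

mae_clean = mean_absolute_error(actual, predicted)
mse_clean = mean_squared_error(actual, predicted)
mae_outlier = mean_absolute_error(actual, with_outlier)
mse_outlier = mean_squared_error(actual, with_outlier)

print("MAE:", mae_clean, "->", mae_outlier)
print("MSE:", mse_clean, "->", mse_outlier)
```

With these values, a single bad prediction raises the MAE from 0.5 to 2.875, while the MSE goes from 0.25 to 25.1875. The squared term is what makes the MSE react so much more strongly to the one outlier.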
